How To Stop The Blinking Animation When Facial Expressions Are Activated In VRChat

ComboGestureExpressions is a Unity Editor tool that lets you attach face expressions to hand gestures, and make them react to other Avatars 3.0 features, including Contacts, PhysBones and OSC.

It is bundled with Visual Expressions Editor, an animation editor that lets you create face expressions with the help of previews.

> Download latest version…

- (Download on github.com)

- (Download on booth.pm)

With ComboGestureExpressions:

- PhysBones, Contacts and OSC can be used to blend in face expressions.

- The pressure on your controller triggers can be used to animate your face.

- Attach multiple expressions on a single gesture by switching between different moods using the Expressions Menu.

- Eyes will no longer blink whenever the avatar has a face expression with eyes closed.

- Puppets and blend trees are integrated into the tool.

- Animations triggered by squeezing the controller trigger will look smooth to outside observers (see corrections).

(Most illustrations in this documentation use Saneko avatar (さねこ) by ひゅうがなつ)

Installation for V1 users

The components used in ComboGestureExpressions V1 and V2 are the same. You will not lose your work.

To install ComboGestureExpressions V2, ComboGestureExpressions V1 must be removed by hand first if it is installed.

- Delete the Assets/Hai/ComboGesture folder.

- Delete the Assets/Hai/ExpressionsEditor folder.

You can now install ComboGestureExpressions.

Remove the preview dummies in the scene; they are no longer needed.

What's new in V2?

Find out what's new in V2.

Having problems? Join my Discord Server

I will try to provide help on the #cge channel when I can.

Join the Invitation Discord (https://discord.gg/58fWAUTYF8).

Set up the prefab and open the CGE Editor

Add the prefab located in Assets/Hai/ComboGesture/ComboGestureExpressions.prefab to the scene. Right-click on the newly inserted prefab and click Unpack prefab completely. Select the Default object, which contains a Combo Gesture Activity component, then click the Open editor button in the Inspector to open the CGE Editor.

Visualize your animation files and preview using AnimationViewer

Select your avatar which contains your Animator.

In the Animator component, click the vertical dots on the right ⋮ and click Haï AnimationViewer. You can now see previews of your animations inside your Project view. Move your scene camera to better see your avatar.

This can cost a little bit of performance, so click the Activate Viewer button to toggle it on and off.

For more information, see AnimationViewer manual

Create a new set of face expressions

To assign animations to hand gestures, drag and drop animation clips from your Project view to the slots in CGE Editor.

To make the CGE Editor display the previews, drag and drop your avatar to the Preview setup field at the top.

Fill the first row completely. The first row contains the animations that play when one hand is doing a gesture, but the other hand is doing a gesture that doesn't exist (i.e. the 🤙 sign).

Then, you can click the Auto-set button in the diagonal. The diagonal contains the animations that play when both hands are doing the same hand gesture. Feel free to choose another animation.

The empty slots in-between will be filled later in this manual, by using a tool to combine animations.

Gesture names for reference (VRChat documentation):

- No gesture / None: 🤙 (Neutral in VRChat docs)

- Fist: ✊

- Open: ✋ (HandOpen in VRChat docs)

- Point: ☝️ (FingerPoint in VRChat docs)

- Victory: ✌️

- RockNRoll: 🤘

- Gun: 🎯👈 (HandGun in VRChat docs)

- ThumbsUp: 👍

- …on both hands / x2: 🙌
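To make the table layout concrete, here is a small sketch that counts the slots of a combo table. The order of the gestures follows the GestureLeft / GestureRight values in the VRChat documentation (0 for Neutral up to 7 for ThumbsUp); the variable and function names are invented for this example and are not part of ComboGestureExpressions.

```python
from itertools import combinations_with_replacement

# Gesture names as used in this manual, listed in the order of the
# VRChat GestureLeft / GestureRight parameter values (0 to 7).
GESTURES = ["None", "Fist", "Open", "Point", "Victory", "RockNRoll", "Gun", "ThumbsUp"]


def combo_slots():
    """All unordered pairs of gestures: the hand order does not matter in a combo."""
    return list(combinations_with_replacement(GESTURES, 2))


slots = combo_slots()
first_row = [s for s in slots if s[0] == "None"]   # one hand has no gesture
diagonal = [s for s in slots if s[0] == s[1]]      # both hands do the same gesture
in_between = [s for s in slots if s not in first_row and s not in diagonal]

print(len(slots), len(first_row), len(diagonal), len(in_between))
```

With eight gestures this gives 36 slots in total, of which 21 are neither in the first row nor on the diagonal. Those are the slots that the combining tool is meant to help with.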

Edit or create animations with Visual Expressions Editor

If you need to create a new face expression animation, click the Create button, or click the Visual Expressions Editor button on the top right.

Another way to open this editor is to go to the Animator component, then click the vertical dots on the right ⋮ and click Haï VisualExpressionsEditor. Move your scene camera to better see your avatar.

In the CGE Editor, at any point, you can click Regenerate all previews to refresh the previews.

For more information, head over to the Visual Expressions Editor documentation.

Combining hands

When your left hand and right hand are not making the same gesture, the animation in the corresponding slot will play.

You can choose your own animation, or use a tool to combine the corresponding animations from the left and right hand by clicking the + Combine button.

When combining, you will see a preview of the two animations mixed together. It is very common for the mixed animation to be conflicting, especially when two animations animate the eyes or the mouth in a different way.

Click the buttons on either side to turn some properties on and off, until you find a face expression that makes sense for that combination of gestures. When satisfied with the result, click Save and assign in the middle.

You can choose to rename the animation using the field above the button.

It is highly recommended to fill out all slots.
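The following sketch shows why a mixed animation can conflict, and what turning properties on and off amounts to. It treats an animation as a plain dictionary of blendshape values, which is a simplification; the function and the blendshape names are hypothetical and do not reflect how the tool stores animations.

```python
def combine(left, right, keep_left=(), keep_right=()):
    """Merge two {property: value} poses.

    Properties animated differently by both poses are reported as conflicts
    unless the caller chose which side to keep.
    """
    merged = {}
    conflicts = []
    for prop in sorted(set(left) | set(right)):
        in_left, in_right = prop in left, prop in right
        if in_left and in_right and left[prop] != right[prop]:
            if prop in keep_left:
                merged[prop] = left[prop]
            elif prop in keep_right:
                merged[prop] = right[prop]
            else:
                conflicts.append(prop)  # still undecided
        else:
            merged[prop] = left[prop] if in_left else right[prop]
    return merged, conflicts


# Hypothetical blendshape names, for illustration only.
smile = {"MouthSmile": 100, "EyeLeftClosed": 0}
wink = {"EyeLeftClosed": 100}

merged, conflicts = combine(smile, wink)
resolved, remaining = combine(smile, wink, keep_right={"EyeLeftClosed"})
```

In this example the eyelid is animated by both poses, so it shows up as a conflict until one side is chosen.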

Do not blink when eyes are closed

Go to the Prevent eyes blinking tab. By selecting which animations have both eyes closed, the blinking animation will be disabled as long as that face expression is active.

It is not recommended to select animations with only one eye closed such as winking, as this will also cause the avatar to stop eye contact.

In your animations, you should not animate the Blink blendshape which is used by the Avatars 3.0 descriptor. If you do, your eyelids will not smoothly animate, and they will not animate on analog Fist gestures.

On many avatar bases, the left eyelid and right eyelid can be animated independently. I would advise you to animate those two blendshapes instead.

Apply to the avatar

Select the ComboGestureExpressions object of the prefab which contains a Combo Gesture Compiler component. In the inspector:

- Drag and drop your avatar in the Avatar descriptor slot. The avatar will not be modified; this is only required to read the default animation values of the avatar, and also to read the blink blendshapes in the Avatar Descriptor.

- Drag and drop your FX playable layer animator to the FX Animator Controller slot. This asset will be modified: new layers and parameters will be added when synchronizing animations. I recommend that you make backups of that FX Animator Controller!

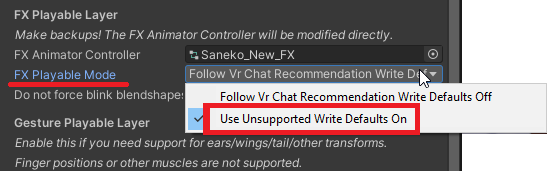

Depending on how your animator is built, choose the right setting in FX Playable Mode: Choose Write Defaults OFF if you are following VRChat recommendations. Try to be consistent throughout your animator.

If you use MMD worlds that contain dance animations, please read the MMD worlds section later in this manual.

You should now be able to press Synchronize Animator FX layers, which will modify your animator controller.

Whenever you modify any face expression animation or anything related to ComboGestureExpressions, press that button again to synchronize.

If you haven't done it already, right-click on the newly created prefab and click Unpack prefab completely.

Squeezing the trigger

In VRChat, to play the hand animations, make a fist with your hand, and squeeze the trigger. The animation will be blended in the more you press the trigger.

The gesture of the other hand is used as the base animation. For instance, if you are making a Point + Fist gesture:

- The Point + Fist animation will be used when the trigger is squeezed.

- The Point animation will be used when the trigger is not squeezed.

When both of your hands are making a fist, you can select two additional animations to play:

- The Fist X2 animation will be used when both triggers are squeezed.

- The Fist X2, LEFT trigger animation will be used when the left trigger is squeezed.

- The Fist X2, RIGHT trigger animation will be used when the right trigger is squeezed.

- The No gesture animation will be used when none of the triggers are squeezed.

Illustration of animation blending in an Analog Fist gesture.
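The blending described in this section can be pictured with the sketch below. It assumes a linear blend for a single fist and a bilinear blend between the four Fist X2 animations; the actual blending is done by the generated animator and may differ. Poses are simplified to dictionaries of blendshape values, and all function names are made up for this example.

```python
def clamp01(x):
    return min(max(x, 0.0), 1.0)


def single_fist(base_pose, combined_pose, trigger):
    """Blend from the other hand's pose toward the combined pose as the trigger is squeezed."""
    t = clamp01(trigger)
    names = sorted(set(base_pose) | set(combined_pose))
    return {
        n: base_pose.get(n, 0.0) + (combined_pose.get(n, 0.0) - base_pose.get(n, 0.0)) * t
        for n in names
    }


def fist_x2_weights(left_trigger, right_trigger):
    """Weight of each of the four animations when both hands make a fist (assumed bilinear)."""
    l, r = clamp01(left_trigger), clamp01(right_trigger)
    return {
        "No gesture": (1 - l) * (1 - r),
        "Fist X2, LEFT trigger": l * (1 - r),
        "Fist X2, RIGHT trigger": (1 - l) * r,
        "Fist X2": l * r,
    }


# "Smile" is a hypothetical blendshape: Point alone leaves it at 0,
# Point + Fist drives it to 100.
half_squeezed = single_fist({"Smile": 0.0}, {"Smile": 100.0}, 0.5)
both_squeezed = fist_x2_weights(1.0, 1.0)
```

The four weights always add up to 1, so the face is fully described by some mix of the four animations at any trigger position.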

In your animations, you should not animate the Blink blendshape which is used by the Avatars 3.0 descriptor. It will not animate on analog Fist gestures.

On many avatar bases, the left eyelid and right eyelid can be animated independently. I would suggest that you animate those two blendshapes instead.

To make your face expression react to interactions and other Dynamics, add the prefab to the scene located in Assets/Hai/ComboGesture/CGEDynamics.prefab. Right-click on the newly inserted prefab and click Unpack prefab completely.

This component lets you define Dynamics. Press the + button to add a new element in the list.

In the Compiler, define your Main Dynamics object above the Mood sets.

You can individually define Dynamics objects in each Mood set, which will only apply them to that Mood set. Make sure you don't have duplicates within your Main Dynamics object!

Illustration of a Dynamics contact.

Select the Dynamic Expression

Select the effect. It can be either a Clip or a Mood set.

After choosing a clip, check the box if both eyes are closed.

For more information about Mood sets, see the Mood sets section later in the manual.

Using a PhysBone as the Dynamic Condition

To use a PhysBone:

- Configure your PhysBone to have a parameter (see VRChat documentation)

- Define the Source to be PhysBone

- Define the PhysBone object

- Define the PhysBone Source to be either Stretch, Angle, or IsGrabbed

The animation will behave differently depending on the PhysBone source:

- If it's Stretch (which is a Float value), the animation will be blended in by how much it is stretched. It is strongest when fully stretched.

- You can change the Upper bound value to a lower value to make it strongest before being fully stretched. A value of 0.75 would make the animation strongest when the PhysBone is 75% stretched.

- If it's Angle (which is a Float value), the animation will be blended in by how much the bone is away from the rest position. It is strongest when angled at 180 degrees away from the rest position.

- You can change the Upper bound value to a lower value to make it strongest at an angle smaller than 180 degrees. A value of 0.75 would make the animation strongest when the angle is 135 degrees (0.75 * 180deg).

- If it's IsGrabbed, the animation will play when the PhysBone is grabbed.
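The effect of the Upper bound value can be worked out with a few lines of arithmetic. The sketch below assumes the parameter value is remapped linearly and clamped, which matches the two examples given above but is not taken from the tool's source; the function names are invented for illustration.

```python
def blend_weight(value, upper_bound=1.0):
    """Map a 0..1 PhysBone value to an animation weight that peaks at upper_bound."""
    if upper_bound <= 0:
        raise ValueError("upper_bound must be positive")
    return min(max(value / upper_bound, 0.0), 1.0)


def angle_to_value(degrees):
    """The Angle source is a Float where 1.0 stands for 180 degrees from rest."""
    return degrees / 180.0


# With an Upper bound of 0.75, the animation is already at full strength
# at 135 degrees, and stays there for larger angles.
at_135 = blend_weight(angle_to_value(135), upper_bound=0.75)
at_180 = blend_weight(angle_to_value(180), upper_bound=0.75)
half_way = blend_weight(0.375, upper_bound=0.75)
```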

Behavior with multiple Dynamic Expressions

The order of Dynamics in this list matters: When two or more Dynamic Conditions are active, the one which is higher in the list has priority.

To use a Contact Receiver:
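The priority rule can be pictured as picking the first active entry when reading the list from top to bottom. This is only a sketch of the rule as stated above; the function and the Dynamics names are hypothetical.

```python
def pick_dynamic(dynamics):
    """dynamics: list of (name, is_active) in the same order as the Dynamics list, top first."""
    for name, is_active in dynamics:
        if is_active:
            return name
    return None  # no Dynamic Condition is active


# Hypothetical Dynamics, listed from highest to lowest priority.
winner = pick_dynamic([("HeadPat", False), ("NoseBoop", True), ("TailGrab", True)])
```

Here NoseBoop wins over TailGrab because it sits higher in the list, even though both are active.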

- Configure your Contact Receiver (see VRChat documentation)

- Define the Source to be Contact

- Define the Contact Receiver object

The animation will behave differently depending on the kind of Contact Receiver:

- If it's Proximity (which is a Float value), the animation will be blended in by the Proximity amount. It is strongest when fully colliding.

- You can change the Upper bound value to a lower value to make it strongest before fully colliding. A value of 0.75 would make the animation strongest when the Contact is 75% colliding.

- If it's Constant, the animation will play when the Contact is being collided with.

- If it's OnEnter, the animation will play for a specified duration the moment the Contact is entered above the velocity. Additional options will show up:

- Set the animation duration.

- If necessary, tweak the curve. The animation will be blended by this curve over the animation duration. Here are some examples of how to use it:

- Tweak the enter transition duration of the expression.

- Note: As of version V2.0.0, due to a limitation, you can only have one curve per Contact of type OnEnter. In addition, Dynamic Expressions priorities work slightly differently as a lower priority OnEnter Contact may be able to take over a high priority OnEnter Contact. This may change in future versions.
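To illustrate how a curve shapes an OnEnter expression over its duration, the sketch below evaluates a piecewise-linear curve at the elapsed fraction of the duration. Unity animation curves are smoother than this, and the key values are invented for the example, so treat it as an approximation of the idea rather than of the tool's behavior.

```python
def evaluate_curve(keys, t):
    """Piecewise-linear curve through sorted (time, weight) keys, with time in 0..1."""
    if t <= keys[0][0]:
        return keys[0][1]
    for (t0, w0), (t1, w1) in zip(keys, keys[1:]):
        if t <= t1:
            return w0 + (w1 - w0) * (t - t0) / (t1 - t0)
    return keys[-1][1]


def on_enter_weight(elapsed, duration, keys):
    """Weight of the expression `elapsed` seconds after the Contact was entered."""
    if elapsed < 0 or elapsed >= duration:
        return 0.0  # the expression only plays for the specified duration
    return evaluate_curve(keys, elapsed / duration)


# Hypothetical curve: quick fade-in, hold, then fade-out over the last 20%.
CURVE = [(0.0, 0.0), (0.1, 1.0), (0.8, 1.0), (1.0, 0.0)]

during_hold = on_enter_weight(0.5, 1.0, CURVE)
after_end = on_enter_weight(2.0, 1.0, CURVE)
```

Moving the second key closer to or further from the start is one way to picture tweaking the enter transition duration.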

Using OSC or Avatars 3.0 parameter as the Dynamic Condition

To use an OSC or Avatars 3.0 parameter as the Dynamic Condition:

- Add the parameter in your avatar Expressions Parameters, if it's not a built-in Avatars 3.0 parameter.

- Define the Parameter Name

- Choose your Parameter type

The animation will behave differently depending on the Parameter type:

- If it's a Float, the animation will be blended in the closer the value gets to 1.

- If it's a Bool or Int, the animation will play when the Bool is true or when the Int value is strictly above 0.

- If you check Behaves like OnEnter, the animation will behave as if this parameter was a Contact of type OnEnter.

Other options

Dynamics conditions with a parameter of type Int or Bool will not blend in.

If it's a Float, it will blend in unless the Hard Threshold option is checked, in which case it will behave like a Bool.

In all of these cases, the dynamic element will be active when the threshold is passed.

For a boolean, IsAboveThreshold means the element is active when it's true.
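The rules for the three parameter types can be summarized in one small function. This is a sketch under assumptions: the threshold value of 0.5 and the names used here are placeholders chosen for the example, not values taken from the tool.

```python
def condition_weight(value, kind, hard_threshold=False, threshold=0.5):
    """Return how strongly a Dynamic Expression is blended in (0.0 to 1.0).

    `threshold` is a placeholder value used only when hard_threshold is set.
    """
    if kind == "Bool":
        return 1.0 if value else 0.0           # active when true, never blended
    if kind == "Int":
        return 1.0 if value > 0 else 0.0       # active when strictly above 0
    if kind == "Float":
        if hard_threshold:
            return 1.0 if value > threshold else 0.0  # behaves like a Bool
        return min(max(value, 0.0), 1.0)       # blended in as the value nears 1
    raise ValueError(f"unknown parameter type: {kind}")
```

For example, a Float of 0.7 yields a weight of 0.7 by default, but a weight of 1.0 once Hard Threshold is checked and the assumed threshold is passed.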

Using multiple mood sets

(A longer tutorial with audio commentary is available)

Earlier, you set up face expressions inside the Default object of the prefab. This is the default mood set of face expressions of your avatar that is active after loading. However, you can have any number of mood sets and switch between them using the menu to increase the number of face expressions depending on the situation.

The prefab contains another object called Smiling as an example, which contains a separate Combo Gesture Activity component. Select that object and rename it; it is up to you to organize the mood sets the way you want (Smiling, Sad, Eccentric, Drunk, Romantic, …) and they do not necessarily have to be moods (Sign Language, One-handed, Conversation, Dancing, …)

Select the ComboGestureExpressions object of the prefab. In the inspector, set a Parameter Name for that new mood set, leaving the first one blank. The blank mood set will be the default mood set that is active when you load your avatar for the first time, or when you deselect a mood set.

In your Expression Parameters, add a new Parameter of type Bool.

In your Expression Menu, create a Toggle to control that Parameter.

Add additional mood sets by clicking + on the list, then drag-and-drop or select another ComboGestureActivity component. Just like the second one, choose another Parameter Name for that mood set, create an Expression Parameter and an Expression Menu toggle.

It is not necessary, but you can optionally add a Parameter Name to the blank mood set. In that case, the first mood set in the list will be the default mood set. This will allow you to add a toggle control to the default mood set in order to have an icon for it.
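The selection rules for mood sets can be sketched as follows. The parameter names are hypothetical, and the choice of the first match when several toggles are on at once is an assumption made for this example; the manual does not describe that case.

```python
def active_mood_set(mood_sets, parameters):
    """mood_sets: list of (parameter_name, mood_set_name) in Compiler order.

    A blank parameter name marks the default mood set. If every mood set has
    a parameter name, the first one in the list is the default.
    """
    for param, name in mood_sets:
        if param and parameters.get(param, False):
            return name  # assumption: the first enabled toggle wins
    for param, name in mood_sets:
        if not param:
            return name
    return mood_sets[0][1]


# Hypothetical parameter names.
MOOD_SETS = [("", "Default"), ("CGE_Smiling", "Smiling"), ("CGE_Sad", "Sad")]

on_load = active_mood_set(MOOD_SETS, {})
toggled = active_mood_set(MOOD_SETS, {"CGE_Sad": True})
```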

Standalone puppets and blend trees

(A longer tutorial with audio commentary is available)

So far we have set up Activity mood sets. Another type of mood set is available: Puppet, which can be controlled by an Expression Menu.

The prefab contains an object called Puppet which contains a Combo Gesture Puppet component. Select it and click the Open editor button in the Inspector.

To make the CGE Editor display the previews, drag and drop your avatar to the Preview setup field at the top.

Create a blend tree using the tool. Select one of the following basic templates: Four directions, Eight directions, Six directions pointing forward, Six directions pointing sideways.

In Joystick center animation, add an animation that will be used when the joystick of the puppet menu is resting at the center. Click Create a new blend tree asset to select a location where to save that blend tree.

There are two additional options when generating the blend tree that should be left at their default values:

- Fix joystick snapping creates 4 additional animations for the resting pose near the center. This is because the joysticks of VR controllers have a dead zone in the middle, which means the animation would otherwise snap when exiting that dead zone.

- Joystick maximum tilt brings the outer animation points slightly closer to the center. This is because the joysticks of VR controllers cannot always be tilted all the way in every direction. This can also be used to avoid tilting the joystick all the way.

After generating the blend tree, edit it in the inspector to assign the face expressions in it. After it is done, select which face expressions have eyes closed by going to the Prevent eyes blinking tab.

Select the ComboGestureExpressions object of the prefab. In the inspector, add a mood set by clicking + on the list. On the left in the dropdown menu, switch from Activity to Puppet, then drag-and-drop or select the Puppet object. Just like Activity mood sets, you can create more Puppet mood sets by creating additional ComboGesturePuppet components.

I recommend creating two controls in your Expression Menu to control the puppet: A Toggle control to switch to the Puppet mood set, and a separate Two-Axis Puppet to control the two parameters of your blend tree.

Illustration of a puppet mood set.
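To picture what these two options do to the blend tree, the sketch below places animation points evenly around the joystick center. The numeric values (0.9 for the maximum tilt, 0.1 for the dead zone radius) are made up for illustration and are not the defaults of the tool, and the placement of the points is an assumption.

```python
import math


def direction_points(count, radius=1.0):
    """Evenly spaced (x, y) points on a circle, starting at the top and going clockwise."""
    points = []
    for i in range(count):
        angle = 2 * math.pi * i / count
        points.append((round(math.sin(angle) * radius, 6),
                       round(math.cos(angle) * radius, 6)))
    return points


# Outer points pulled slightly toward the center (assumed maximum tilt of 0.9).
outer = direction_points(4, radius=0.9)

# Four extra resting-pose points around the dead zone (assumed radius of 0.1),
# so the face does not jump when the joystick leaves the center.
resting = direction_points(4, radius=0.1)
```

Calling direction_points(8, radius=0.9) would give the outer points of an eight-direction layout in the same way.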

Animate cat ears, wings and more

(A longer tutorial with audio commentary is available)

In Avatars 3.0, animations that modify transforms belong in the Gesture playable layer. In face expression animations, this is most often used to animate ears, wings, tails…

Skip this step if you do not have such animations. You should only enable Gesture Playable Layer Support if you do animate those in your face expression animations.

Note that finger poses and humanoid muscle poses will be ignored by this process. Animating finger poses is done by modifying the Gesture layers, which is outside the scope of this documentation.

If you do not have a gesture layer, duplicate one of the VRChat SDK examples and assign it to the Gesture playable layer of your avatar:

- Assets/VRCSDK/Examples3/Animation/Controllers/vrc_AvatarV3HandsLayer2 for feminine hand poses,

- Assets/VRCSDK/Examples3/Animation/Controllers/vrc_AvatarV3HandsLayer for masculine hand poses.

Select the ComboGestureExpressions object of the prefab. In the inspector, tick the Gesture playable layer support checkbox, and assign your Gesture playable layer animator to the Gesture Animator Controller slot. This asset will be modified: new layers and parameters will be added when synchronizing animations. I recommend that you make backups of that Gesture Animator Controller!

Depending on how your animator is built, choose the correct setting in Gesture Playable Mode: Choose Write Defaults OFF if you are following VRChat recommendations. Try to be consistent throughout your animator.

Handling the Gesture Playable is very tricky, and extra precautions need to be taken:

- You will see a red warning regarding Avatar Masks if ComboGestureExpressions detects that your FX Playable Layer may be incompatible with your Gesture Playable Layer, in which case it will suggest a fix. If that's the case, click Add missing masks. This will add a mask to the layers of your FX Playable Layer that do not yet have an Avatar mask.

- If you add new layers to the FX Playable Layer, you may have to click Add missing masks if you see the red warning again.

- Press Synchronize Animator FX and Gesture layers every time you make a change in the FX Playable Layer. That is because the mask is generated based on the animations within the FX Playable layer.

- You should not share your Gesture Playable Layer between two very different avatars that do not have the same base, because the avatar is being used to capture the default bone positions of the avatar when it is at rest, so that animated transforms can reset to a base position when they are not being used.

If you would like to know why an Avatar mask is needed on layers of the FX Playable Layer, you may find additional information here.

Permutations

(A longer tutorial with audio commentary is available)

For simplicity purposes, we've been using combinations of gestures, meaning that Left Point + Right ThumbsUp will show the same animation as Left ThumbsUp + Right Point. I encourage you to use multiple mood sets available in an Expressions menu to expand your expressions repertoire.

If you would like to create permutations of gestures, which I do recommend for asymmetric face expressions or hand-specific Fist animations, change the Mode dropdown at the top left and select Permutations. You will see a colored table split between Left hand permutations (colored in orange) and Right hand permutations (colored in blue).

When this mode is selected in the dropdown, the Activity will behave as if everything was still a combo: If you don't define a Left hand permutation, the Right hand permutation animation will be used for both.
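The fallback behavior can be sketched as a lookup with an optional override. With eight gestures there are 36 combos but 64 ordered permutations, so most permutation slots can stay empty and fall back. The data layout, function name and animation names below are assumptions made for this example, not the way the component stores its slots.

```python
def resolve(shared, left_overrides, left, right):
    """Pick the animation for a (left gesture, right gesture) pair.

    shared:         one animation per unordered pair of gestures (the combo).
    left_overrides: optional animations for specific ordered (left, right) pairs.
    """
    override = left_overrides.get((left, right))
    if override is not None:
        return override
    return shared[frozenset((left, right))]  # behaves like a combo


# Hypothetical animation names.
shared = {frozenset(("Point", "ThumbsUp")): "Grin"}

as_combo = resolve(shared, {}, "ThumbsUp", "Point")
overridden = resolve(shared, {("Point", "ThumbsUp"): "Smirk"}, "Point", "ThumbsUp")
```

When no override is defined, both hand orders resolve to the same animation, which is the combo behavior described above.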

Mix puppets and gestures

(A longer tutorial with audio commentary is available)

Any animation slot can have a blend tree within it instead. This means puppeteering is possible for specific combos of hand gestures.

The Analog Fist gesture can be completely customized using it, and it is even possible to simultaneously combine the Fist analog trigger with a puppet menu if you feel like it. Remember that puppets retain their values when closing the menu, so you don't necessarily need to have your puppet menu opened.

The blend tree template generator can be accessed in the Additional editors > Create blend trees tab. For puppet menus, use the previously mentioned templates. For Fist gestures, select one of the following templates: Single analog fist with hair trigger, Single analog fist and two directions, Dual analog fist.

When placing a blend tree in a single Fist gesture, the parameter _AutoGestureWeight will be how much the trigger is squeezed.

When placing a blend tree in the Fist x2 slot, the parameters GestureLeftWeight and GestureRightWeight will be how much the left and right triggers are squeezed respectively.

MMD worlds compatibility option

MMD worlds are particular in regards to face expressions. If you are a regular user of MMD worlds and need your avatar to be compatible, the avatar needs to be set up in a specific way in order to get face expressions working. If you don't use MMD worlds, don't bother with this!

For MMD worlds specifically, using Write Defaults ON is recommended.

In the Compiler at the bottom, define the field called MMD compatibility toggle parameter. Create an Expressions Menu toggling that parameter.

In the FX Playable Layer settings, set the FX Playable Mode to Use Unsupported Write Defaults On. Do the same for the Gesture Playable Layer if you have one.

When the parameter is active, the face expressions of your avatar will stop playing whenever your avatar is in a chair or a station. Stations are what MMD worlds use to play animations on the avatar.

It is possible to use Write Defaults OFF with a big caveat: you won't be able to use the FX layer, therefore no toggles or other avatar gimmicks. CGE's MMD worlds compatibility will not be able to help you with this.

Learn more

- Corrections - Learn about the various techniques used to fix animations.

- Integrator - Documentation about the Integrator, a module to add Weight Corrections without using ComboGestureExpressions.

- Write Defaults - Explanation of how the Avatar Mask is built.

- Download on github.com - Main download location.

- Download on booth.pm - Alternate download location.

Most illustrations in this documentation use Saneko avatar (さねこ) by ひゅうがなつ

Source: https://hai-vr.github.io/combo-gesture-expressions-av3/

Posted by: youngthadders.blogspot.com
